Landscape, Notable Players, and Trends in Cloud, SaaS - Part 1

Summary

We review cloud computing, the SaaS landscape, and the DevOps trends.

We attempt to add some historical context whilst also reviewing leading public stocks and promising startups.

In Part 1 we cover cloud computing, rising stars in Kubernetes and serverless, and the SaaS landscape.

In Part 2 we’ll cover DevOps, some of the leading names, and also discuss the security side.

Key Takeaways

The insatiable appetite for greater economies of scale is the fundamental catalyst that has driven the IT industry forward, both before and during the cloud computing evolution.

Increasing levels of abstraction in the cloud era have made application hosts more compact, portable, and cost-effective. This has enabled developers to deliver even greater scale economies for their orgs.

The ceiling has not been reached yet, however. More startups and technologies emerge that relieve developers from the onerous duties associated with provisioning the back-end infrastructure. This allows developers to focus more on creating differentiated value at the front-end of development, and gives operations more scope for further scaling.

Thanks to the abstractions, developers and IT operations can now build resilient and high-performing applications. The downside is that the increase in complexity is extremely challenging to manage and makes orgs more vulnerable to cyberattacks.

A philosophical approach to better manage the complexity involves closer collaboration between developers, operations (i.e., DevOps), and security (i.e., SecDevOps).

As teams pursue DevOps/SecDevOps, demand has been rising for SaaS vendors whose software can help. Vendors that can bring these ideals to fruition may well be the players that generate the most incremental value for orgs operating in the cloud over the next few years.

The broader SaaS landscape has become so fragmented that the typical org now has 100+ different apps to manage. Hence to drive the next wave of value for orgs, a degree of consolidation feels paramount.

Taking the previous points into account, long-term investors should consider SaaS vendors 1) that can further unburden developers from the back-end plumbing involved in building applications, 2) that are quickly expanding their platforms to crowd out the competition, 3) that can empower IT teams to bring dev, ops, and sec together seamlessly, with more transparency and understanding, 4) that have organic bottom-up adoption within the DevOps community, and 5) that can help developers be speedier and more creative at the front-end of development. Note that this is not a checklist, just some considerations.

Ultimately, consider any vendor that enables orgs to operate in the cloud with better security, improved quality, and faster time-to-market.

Overview

Cloud computing delivers many of the products we’re familiar with today, such as ecommerce, social media, and video streaming services. For instance, Shopify and Snapchat are big spenders on GCP (Google’s Cloud), and Netflix has a big budget for AWS (Amazon’s Cloud).

Whether these products are software you consume via a browser on your desktop, or via an app on your smartphone, they will generally fall under the category of SaaS (Software-as-a-Service). SaaS has become widely prevalent because it delivers benefits to both end users and application owners. End users (consumers or businesses) avoid having to install/manage applications on their desktop, or only need to install lightweight apps on their smartphone, which saves time and allows the device to perform better. And application owners can deliver better service while lowering their costs as their SaaS app is hosted in the cloud.

Cloud computing not only enables end users and orgs to consume software more conveniently, it also makes it easier for developers and orgs to build and deploy software. Combining the cloud with best practices in software development and IT operations is called DevOps. It is the DevOps philosophy that has powered the rapid feature and product innovation at the top software firms in recent years.

In this report we break down cloud computing into SaaS (end users and orgs that consume software from the cloud) and DevOps (developers and orgs that build and deploy software in the cloud), as this covers most of what clouds are used for.

Firstly, we'll cover the journey to cloud computing, and then how it has evolved over the past 16 years, which should lay a foundation for understanding its current state and for considering its future.

Cloud Computing

Journey to the Cloud

The drive to gain greater economies of scale has been the primary catalyst for the computer industry evolving to the cloud. From the 1950s through to the 1990s, computing architecture shifted from the mainframe, to the client-server model, and then to data centres, each delivering a step-up in economy by increasing both the number of clients that a server can serve, and the number of servers bunched together.

In the 1990s, improvements in networking protocols, computing power, and data compression made it possible to efficiently transmit data to and from a data centre that might be a fair distance away from end users. This led corporations to build large data centres housing thousands of servers to gain the maximum scale economies possible.

In 2006, the computer industry witnessed the birth of cloud computing when Amazon unveiled AWS. In simplistic terms, AWS and the other hyperscalers provide gigantic data centres to drive further scale economies. Ever since the server was decoupled from the client in order to serve multiple clients, the primary way to gain efficiency has been to put more and more servers together, eventually leading to the hyperscale environments known as clouds.

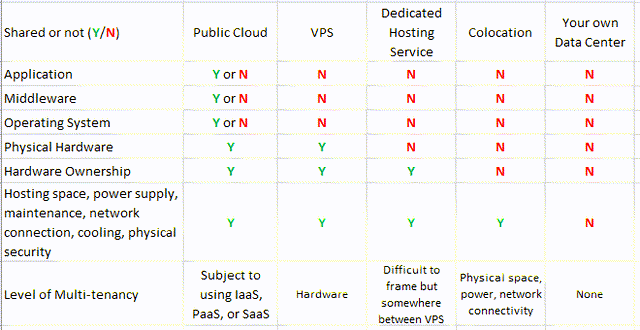

In essence, it is the sharing of resources that drives the economies: multiple clients sharing one server, multiple departments sharing one data centre, multiple companies sharing one cloud. The distinguishing characteristic of the last of these is that it is a business model rather than an internal benefit for a corporation. Earlier attempts to turn computer resource sharing into a business model (from around the 1990s to the mid-2000s) include VPS (Virtual Private Server), Dedicated Hosting Service, and Colocation. Here we outline the differences among these services with respect to where the sharing of resources occurs.

Source: Convequity

The closest predecessor to cloud computing was VPS, which became a very popular market in the early 2000s after VMware released its first server hypervisor, ESX (the predecessor of ESXi). It allowed operators to buy hypervisor software licenses and virtualize (partition) a physical machine into multiple isolated instances, known as VMs (Virtual Machines). This delivered even greater economy because multiple VMs can be hosted on one server, and one VM can serve multiple clients.

At its core, EC2, one of AWS’ first major products, is based on this model of compute, but Amazon has made numerous innovations to make it more suitable for modern-day digital infrastructure operations. Firstly, EC2 elevated the concept of multitenancy, in which different customer orgs share computing resources. This generated additional efficiencies and essentially made cloud computing highly lucrative for the hyperscalers.

Most notably, EC2 provides mission-critical features not available with VPS. These include optimized variants (choice of instance type – optimized for compute, storage, memory, or general purpose), high availability (hardware redundancy to ensure 99.99% system uptime), data durability (ensuring access to undegraded data), high scalability, on-demand pricing, and high connectivity. In essence, EC2 delivers so much more than just the scalability provided by resource sharing.

The key consideration is that EC2 instances run on top of a cluster of interconnected, virtualized servers, so a hardware failure in one physical server won't significantly impact the availability of operations. With internal (east-west) hyperconnectivity, developers can also use more sophisticated designs and topologies. As such, EC2 has evolved VPS into Virtual Private Clouds, hence the product name Elastic Compute Cloud (EC2).
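To make the availability point concrete, here is a rough back-of-the-envelope sketch. It assumes server failures are independent and that the service stays up as long as at least one replica is up; the 99% per-server figure and the function names are illustrative assumptions, not AWS data or an AWS API.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours in a non-leap year


def downtime_hours_per_year(availability: float) -> float:
    """Expected hours of downtime per year for a given availability (0..1)."""
    return (1.0 - availability) * HOURS_PER_YEAR


def redundant_availability(single: float, replicas: int) -> float:
    """Availability of a group of replicas, assuming independent failures
    and that one healthy replica is enough to keep the service up."""
    return 1.0 - (1.0 - single) ** replicas


if __name__ == "__main__":
    # Hypothetical single server that is up 99% of the time.
    single = 0.99
    for n in (1, 2, 3):
        a = redundant_availability(single, n)
        print(f"{n} replica(s): availability={a:.6f}, "
              f"downtime={downtime_hours_per_year(a):.3f} h/year")
```

Under these simplified assumptions, two 99% servers together reach roughly 99.99%, which corresponds to less than one hour of downtime per year. Real-world failures are often correlated (shared power, network, or software bugs), so actual figures will differ.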

These attributes provided by EC2 serve as the backbone for DevOps teams to deliver the required business agility to their orgs. They enable teams to innovate faster, bring applications to market quicker, scale capacity to meet demand more efficiently, and build applications with high levels of resiliency.

Evolution of the Cloud

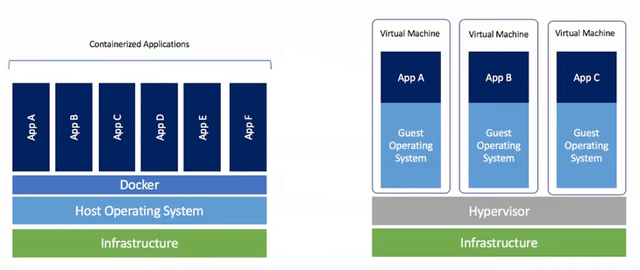

The pursuit of greater economies of scale continues. VMs share the same hardware but not the OS kernel. Hence, in the early cloud days, developers needed a full OS to themselves just to host a lightweight application or a microservice, generating unwanted overheads.

Ultimately, developers care about applications only. Therefore, there have been multiple attempts to further knock down the overheads to achieve greater economic gains.

Containers

A major milestone was the launch of Docker in 2013. Docker brought containers to the mainstream: a container packages together all the dependencies needed to run an application, and nothing more. As a result, each container uses only the bare minimum resources it needs from the OS, which allows the OS to be shared by potentially hundreds of containers. Giving devs access to just the OS resources their application needs, rather than requiring them to manage an entire OS, is what greatly reduces the overheads.
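The overhead argument can be illustrated with simple arithmetic. The sketch below compares how many copies of a small application fit on one host when each copy needs its own guest OS (VMs) versus when all copies share one OS (containers). Every number here (host memory, per-VM guest OS footprint, shared OS reserve, application size) is a hypothetical assumption chosen for illustration, and memory is only one of several resources that matter in practice.

```python
def vm_capacity(host_mb: int, guest_os_mb: int, app_mb: int) -> int:
    """Each VM carries its own guest OS in addition to the application."""
    return host_mb // (guest_os_mb + app_mb)


def container_capacity(host_mb: int, shared_os_mb: int, app_mb: int) -> int:
    """Containers share a single OS; only the application footprint repeats."""
    return (host_mb - shared_os_mb) // app_mb


if __name__ == "__main__":
    host_mb = 64 * 1024   # hypothetical 64 GB host
    app_mb = 256          # hypothetical lightweight microservice

    vms = vm_capacity(host_mb, guest_os_mb=1024, app_mb=app_mb)
    containers = container_capacity(host_mb, shared_os_mb=2048, app_mb=app_mb)
    print(f"VMs per host:        {vms}")
    print(f"Containers per host: {containers}")
```

With these assumed figures the same host fits 51 VMs or 248 containers. The exact ratio depends entirely on the inputs, but the direction of the result is what the paragraph above describes.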

The compactness also makes containers very portable, which helps devs build robust applications. Applications are broken down into numerous components within a microservices architecture, which makes updates and upgrades more manageable and the application more resilient and highly available.

Source: sdxcentral.com

Kubernetes

Not long after Docker and containers were introduced, Kubernetes (K8s) came along. Docker kicked off the initial buzz, but container technology wasn't yet mature enough for mass migration. The main impediment was the need to build a complex orchestration layer to start and stop thousands of containers efficiently and securely.
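At the heart of an orchestration layer is the idea of continuously comparing the desired state (how many copies of each application should run) with the actual state, and starting or stopping containers to close the gap. The toy sketch below illustrates that idea only. The `Cluster` class and `reconcile` function are hypothetical names invented for this example; they are not the Kubernetes API, and a real orchestrator also handles scheduling, networking, health checks, and security.

```python
import itertools


class Cluster:
    """Toy in-memory stand-in for a fleet of running containers."""

    def __init__(self) -> None:
        self.running = {}               # container id -> application name
        self._ids = itertools.count()   # source of unique id suffixes

    def start(self, app: str) -> str:
        cid = f"{app}-{next(self._ids)}"
        self.running[cid] = app
        return cid

    def stop(self, cid: str) -> None:
        del self.running[cid]


def reconcile(cluster: Cluster, desired: dict) -> list:
    """Start or stop containers until actual counts match desired counts.

    `desired` maps application name -> wanted number of containers.
    Returns the list of actions taken, for inspection.
    """
    actions = []
    apps = set(desired) | set(cluster.running.values())
    for app in sorted(apps):
        current = [cid for cid, a in cluster.running.items() if a == app]
        want = desired.get(app, 0)
        # Too few running: start the missing ones.
        for _ in range(want - len(current)):
            actions.append("start " + cluster.start(app))
        # Too many running: stop the surplus.
        for cid in current[want:]:
            cluster.stop(cid)
            actions.append("stop " + cid)
    return actions


if __name__ == "__main__":
    cluster = Cluster()
    print(reconcile(cluster, {"web": 3}))      # scale up from nothing
    cluster.stop(next(iter(cluster.running)))  # simulate a crashed container
    print(reconcile(cluster, {"web": 3}))      # the loop replaces it
    print(reconcile(cluster, {"web": 1}))      # scale down
```

Running the same reconcile step repeatedly is what lets such a system recover from failures without manual intervention: a crashed container simply shows up as a gap between desired and actual state on the next pass.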

In 2014, the three Google engineers who built Kubernetes (K8s) succeeded, after lengthy discussions with senior management, in releasing it as an open-source project.

K8s, drawing on Google's many years of running containers at massive scale internally, offers developers a proven orchestration tool for managing containers in production environments. Because of its open-source nature and Google origins, however, K8s is not a typical off-the-shelf package that you can download, plug in, and play. Getting K8s into production takes many workarounds and a good deal of custom coding, which requires a large, talented IT team. This pushed some cloud players to offer KaaS (Kubernetes-as-a-Service), which provides K8s in a production-ready format. With KaaS, DevOps teams can get started quickly, using a control plane interface to manage, monitor, and automate their clusters and to build in security best practices.

K8s vendors

Hyperscalers & Incumbents

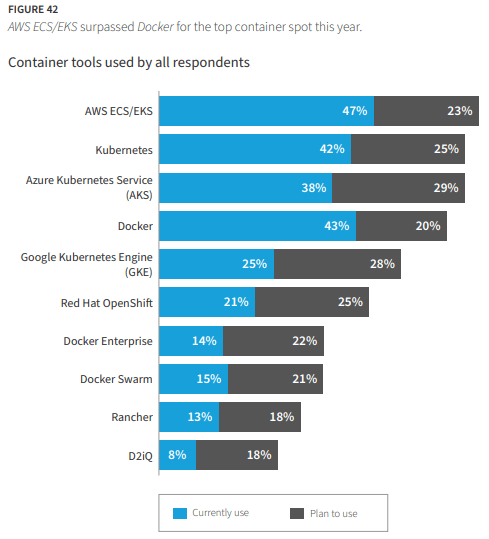

The dominant KaaS names are the CSPs: EKS (Elastic Kubernetes Service) by AWS, GKE (Google Kubernetes Engine) by Google, and AKS (Azure Kubernetes Service) by Microsoft Azure. There are also multi-cloud KaaS services, such as OpenShift by Red Hat and Tanzu by VMware, that help avoid vendor lock-in with the CSPs. KaaS is quickly rising in popularity because it saves DevOps teams from tricky integrations, autoscaling tasks, updates, configurations, and cluster and API management, allowing them to focus on more value-generating activities whilst saving their orgs costs in the process. This slide from Flexera’s State of the Cloud Report for 2022 gives an insight into the adoption of managed options for containers/Kubernetes (based on 753 respondents).

Source: flexera.com - page 52